I’m seeing a narrative building in the post-OpenClaw / Claude Co-Work world: the future of software is just massive databases (Snowflake, Databricks and friends) with swarms of agents running on top, spinning up custom workflows and UIs on demand.

Why build application companies when you can just conjure bespoke solutions for any workflow and any user?

I don’t buy it. I’ve found it helpful to think about this from the perspective of the buyer (the CTO/CIO of an enterprise).

The 8 Constants of Enterprise Products

When companies buy application software, they’re not just buying lines of code. They’re buying the below “8 Constants of enterprise products”:

- Reliability from battle-testing

- Repeatability across teams and use cases

- Security that’s been audited

- Brand and someone to blame when things go wrong (“you don’t get fired for buying IBM”)

- Service & maintenance to fix issues as they arise

- Business case that delivers economic value at a cost profile that makes sense and can be budgeted

- Product roadmap, i.e. the product keeping pace with the rate of innovation in the market

- Opinions on how a workflow should actually run, distilled from hundreds of customer deployments

I call them “Constants” because, like physical constants, I believe they hold regardless of what the underlying technology does. The last one matters more than people think. While many people today are experimenting with building their own workflow automations with agents, my view is that the long tail of enterprise employees don’t want to figure out what they could automate; they’d rather buy something that does the job. What’s more, the right way to solve a problem, and to pressure-test the solution, may not be obvious. That’s why we buy applications from those who have done the work.

Occam's razor: when code is free, simplicity wins

People assume that if AI drops the cost of code to zero, we’ll drown in it. Infinite code, everywhere, for everything.

But that’s a CTO’s nightmare. More code means less reliability, harder debugging, and systems that humans didn’t write and barely understand. Occam’s razor suggests the opposite: the simplest solution wins. When code gets cheap, the power of our systems will increase (the shift to agentic systems of action, etc.), but over time value shifts to efficiency, i.e. how much value you can deliver per unit of code.

Balancing inference & code

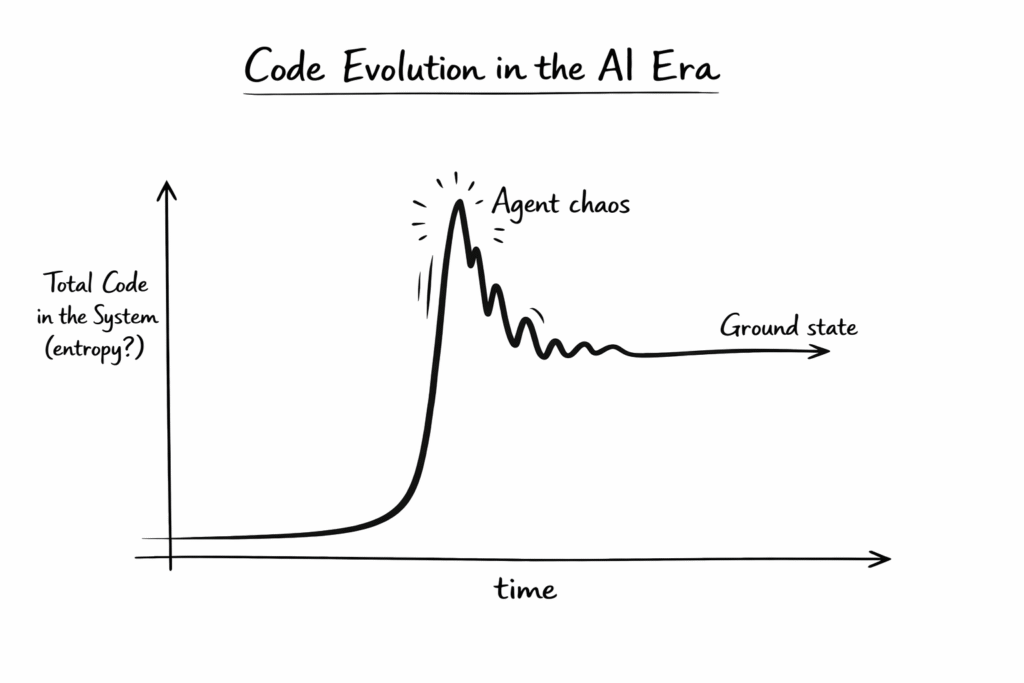

As a physics student I was taught to think in local or global maxima and minima. Right now we’re at a local maximum, with relatively stable code per unit of value. AI will initially spike us up as every company experimenting with agents and custom workflows generates code like crazy. People who have never written code before are getting in on the action with Claude Code.

That initial spike will be an unstable maximum. Over time, patterns will emerge and opportunities for repeatability will crystallise. The chaos of a thousand custom agents will collapse into refined, simple applications and agentic systems that do the same job, much better, in a cost-efficient manner, over and over again.

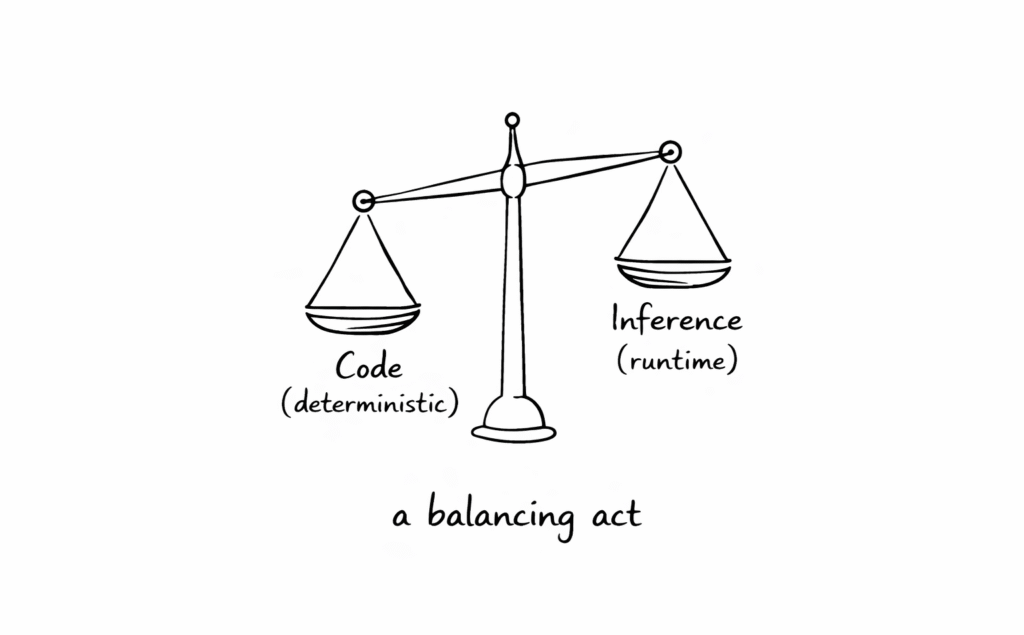

The nuance for CTOs and CIOs will be striking the balance between code (compute at build time) and inference (compute at runtime). Pure inference (agents generating everything on the fly) is powerful but expensive and unpredictable. Pure code (writing everything upfront) is inflexible but cheap and reliable. The equilibrium lives between them.

For example, there are some processes, like payroll, where you don’t want an AI to “infer” the way to do it each time. There is a “right” way to do it, and you don’t want it to be unpredictable or to run at an unpredictable cost. Therefore, it’s better to codify.

That’s not to say all parts of the process should be deterministic. Inference may thrive in certain parts of the workflow.
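To make the split concrete, here is a minimal sketch of one workflow that mixes both modes. Everything in it is an illustrative assumption rather than a real system: the flat tax rate, the label set, and `stub_model`, which stands in for whatever model call a real deployment would use. The payroll calculation is codified, so it is predictable and cheap; the free-text question is handed to inference, with its answer constrained to known labels so the rest of the system stays stable.

```python
from decimal import Decimal
from typing import Callable

# Hypothetical values for illustration; real payroll rules are far richer.
FLAT_TAX_RATE = Decimal("0.20")
ALLOWED_LABELS = {"payslip", "tax", "leave"}


def net_pay(gross: Decimal) -> Decimal:
    """Codified step: same input, same output, known cost on every run."""
    return (gross * (1 - FLAT_TAX_RATE)).quantize(Decimal("0.01"))


def classify_question(text: str, infer: Callable[[str], str]) -> str:
    """Inference step: a model interprets free text, but its answer is
    constrained to a fixed label set so downstream code stays predictable."""
    label = infer(text).strip().lower()
    return label if label in ALLOWED_LABELS else "other"


def stub_model(text: str) -> str:
    """Stand-in for a real model call (assumption: no specific vendor API)."""
    return "Payslip" if "payslip" in text.lower() else "something unexpected"


if __name__ == "__main__":
    print(net_pay(Decimal("4200.00")))
    print(classify_question("Where is my payslip?", stub_model))
    print(classify_question("What's for lunch?", stub_model))
```

The design choice worth noting is the boundary: inference is allowed to interpret, but only deterministic code is allowed to compute the number that ends up on a payslip.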

Where does value accrue in enterprise solutions?

Enterprise systems like ERPs and CRMs have historically had poor usability, and people only use them via an interface if they really have to. Yet they command multi-million dollar contracts. The value ascribed to these contracts is therefore not really about usage, or the code itself. It’s much more about the way they deliver on the 8 Constants above (reliability, repeatability, service, etc.). Again, a software business is much more than just code.

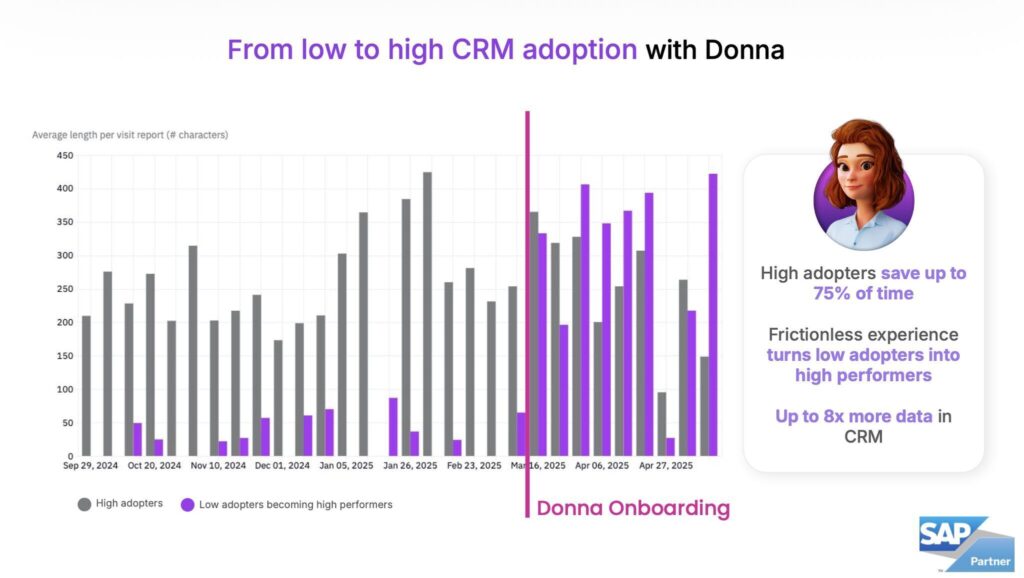

What’s exciting is that AI can let you win on both sides at once. Where legacy systems were reliable but painful to use, AI-native applications can be powerful and actually enjoyable.

We’re already seeing companies deliver on this, like Frontline portfolio company Donna, which has built a multi-modal AI assistant for field sales teams. The chart below shows how organisations running Donna alongside SAP CRM are seeing significant usage increases. Deterministic code gives them the reliability they need; AI inference gives the user experience they actually want.

Will there be more code?

Yes. Software will keep eating the world and AI will accelerate this. The TAM of software keeps expanding. But code efficiency will improve dramatically too. The complexity isn’t in writing more lines of software; it’s in deciding where to use code versus where to use inference.

To continue the physics analogy: at the limit, systems settle into the lowest energy configuration that still delivers full value, known as their “ground state”. In other words, future systems will optimise for maximum capability with minimal waste. The application layer will find that ground state: powerful enough to handle complex workflows agentically, simple enough to be stable and cost-efficient.

The chart above demonstrates this transition: an initial spike due to experimentation, followed by a stabilisation as repeatable patterns are distilled and optimised, before settling in a ground state.

Should Enterprises build or buy?

This clicked for me last week talking to the CEO of a non-tech enterprise who said to me: “I’m paying too much for my systems of record and nobody likes using them. I’d love to replace them. Should I build my own or buy? What would you advise?”

In that moment the answer became clearer to me: Buy. 100%.

Sure, he could spin up a team to replicate a CRM’s code in a couple of days with Claude et al. But then he would have to maintain it and debug it when things go wrong. More importantly, he couldn’t keep pace with a vendor whose entire business is building and innovating on that product.

If it’s not your main focus, you’ll fall behind fast if you try to do this in-house today, and you’ll be stuck maintaining an out-of-date stack instead of growing your core business. I don’t doubt that some tech-forward companies will experiment with DIY systems. But for most enterprises, particularly in non-tech sectors, it won’t be the optimal approach.

So does the future of software look like the past?

Not quite. Actually, not at all. To get the most value out of the transition from deterministic applications and systems of record to agentic systems of action, AI workers, and all the future capabilities of AI-native systems, I do think the enterprise tech stack will need to evolve dramatically.

It’s unclear what that looks like exactly, but it feels like some kind of unified data plus business logic layer (to maintain the integrity of agentic apps) will be the foundation. On top there will be an application layer, some of which will be custom, sure. But in my view, third-party apps aren’t going anywhere. CTOs/CIOs will want to purchase from AI vendors whose core business is doing the job they are being hired for in the first place. This is the approach that optimises for cost-adjusted value (outsource to someone who knows what they’re doing), ensures reliability and accountability (someone to blame), and provides the optionality to swap vendors in and out if needed.

The application layer strengthens, not weakens

In a world where everyone can generate code, the winners will be companies selling “we figured this out already, and our solution is more powerful, more cost-optimised and more reliable than what you could build yourself”.

For founders, this means the opportunity is in finding those natural, energy-efficient, repeatable states. Workflows that collapse into elegant simplicity. Patterns every company needs but shouldn’t rebuild. Application companies that nail these equilibrium points where code and inference balance perfectly, and can wrap them in the “8 Constants” that enterprises actually buy, will capture outsized value.

Of course, there are no right answers here, so I’m always happy to debate this topic with others via LinkedIn or email.